Technologies

- Unity - The game engine and development platform.

- FMOD - The audio engine that powers our game's sound.

- HTC Vive - The VR system that took our game to the next level.

- Arduino - The microcontroller platform behind our custom controller.

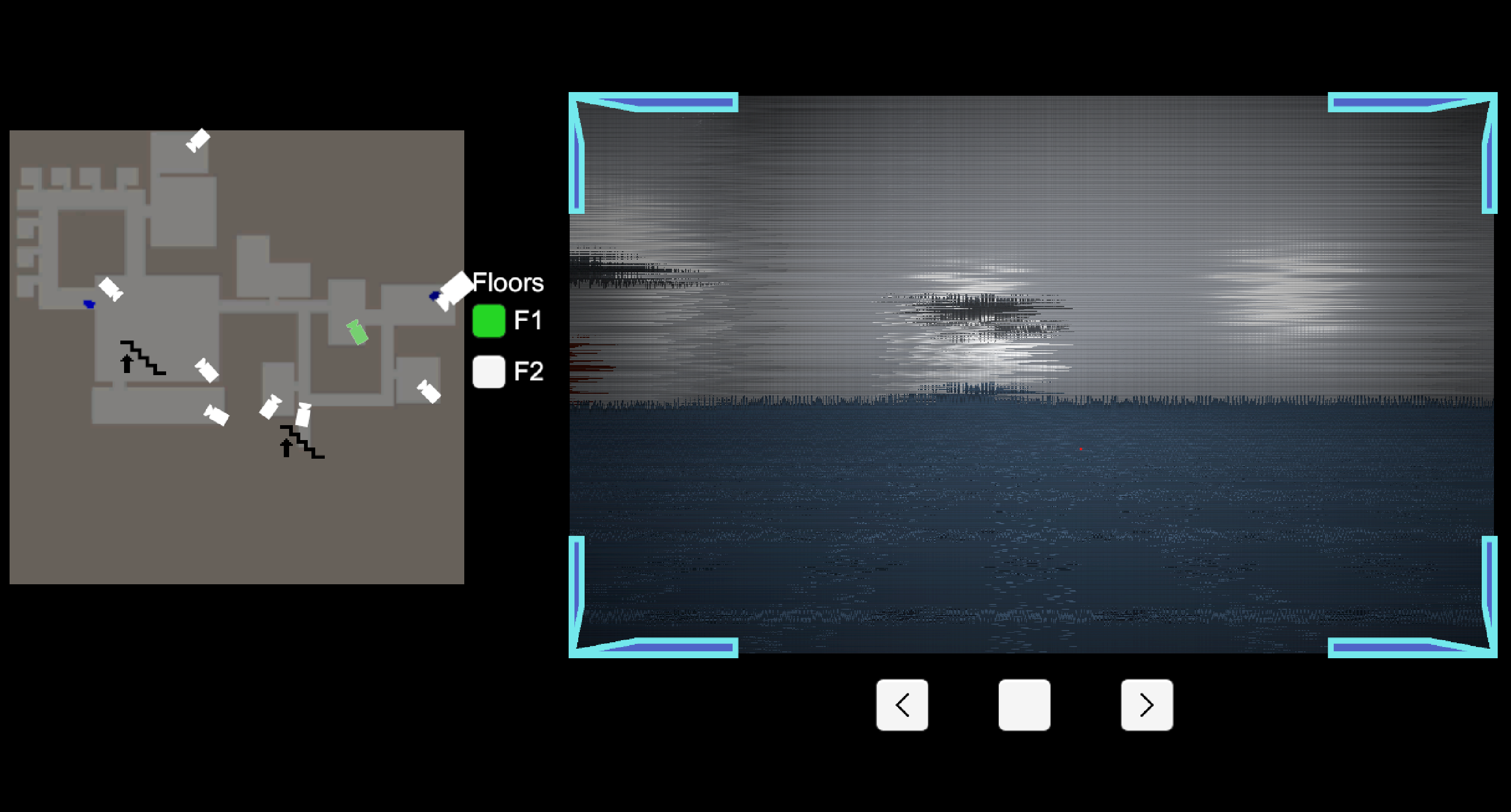

COVRT, our first project, is a virtual reality experience where you and your teammate must work hand-in-hand to solve intricate puzzles and unlock the secrets of a mysterious facility.

We wanted to explore the potential for interaction within the immersive realm of virtual reality. Beyond the technology powering the simulation, we experimented with a variety of input devices and with the dynamic interactions between players within the game world.

Download on GitHub

There are multiple ways to handle audio in game development. Common options include Unity's built-in audio engine, FMOD, and Wwise. We chose FMOD as a middle ground between the simplicity of Unity's built-in engine and the complexity of Wwise. In FMOD, you create events and parameters and use them to trigger sounds and music. We exported these sounds into bank files that we then imported into Unity. We also relied on other tools, such as Audacity to edit and mix sounds and some VST plugins to add effects to the audio.

From tight deadlines to broken prototypes, we faced many challenges during our development process. We learned to distribute tasks and improvise solutions to overcome these difficulties:

Originally, the operator interacted with the game through a mouse and keyboard, but since this was a course focused on graphics and interaction, we soon looked for a more engaging solution. We built a controller with an Arduino featuring a switch for changing floors, a joystick, a button for camera selection, and two potentiometers for camera adjustment. Wiring the components was straightforward, but communication between Unity and the Arduino proved challenging, so we implemented a Python server as a mediator. We had originally planned a 3D-printed shell, but printer restrictions forced us to adapt: we built a sturdy black cardboard box instead, which users did not notice until it was pointed out.
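A mediator of this kind can be quite small. The sketch below is a minimal illustration under stated assumptions, not the server we actually ran: it assumes the Arduino prints one comma-separated line per reading (floor switch, joystick X and Y, camera button, two potentiometers) with 10-bit analog values, and that Unity listens for JSON datagrams on a hypothetical UDP port 9000. The names `parse_controller_line`, `bridge`, `FIELDS`, and `UNITY_ADDR` are illustrative.

```python
import json
import socket
from typing import Iterable, Optional

# Hypothetical Unity endpoint: the game would listen on this UDP port.
UNITY_ADDR = ("127.0.0.1", 9000)

# Assumed Arduino output: one line per reading, e.g. "1,512,498,0,1023,300"
# = floor switch, joystick X, joystick Y, camera button, pot 1, pot 2.
FIELDS = ("floor", "joy_x", "joy_y", "camera_button", "pot_1", "pot_2")
ADC_MAX = 1023  # 10-bit analog readings, as on boards such as the Arduino Uno


def parse_controller_line(line: str) -> Optional[dict]:
    """Turn one serial line into a normalized state dict, or None if malformed."""
    parts = line.strip().split(",")
    if len(parts) != len(FIELDS):
        return None
    try:
        raw = dict(zip(FIELDS, (int(p) for p in parts)))
    except ValueError:
        return None
    return {
        "floor": raw["floor"],
        # Map joystick axes from 0..1023 to -1..1.
        "joy_x": raw["joy_x"] / ADC_MAX * 2 - 1,
        "joy_y": raw["joy_y"] / ADC_MAX * 2 - 1,
        "camera_button": bool(raw["camera_button"]),
        # Map potentiometers from 0..1023 to 0..1.
        "pot_1": raw["pot_1"] / ADC_MAX,
        "pot_2": raw["pot_2"] / ADC_MAX,
    }


def bridge(lines: Iterable[str], sock: socket.socket, addr=UNITY_ADDR) -> int:
    """Forward every valid line to Unity as a JSON datagram; return how many were sent."""
    sent = 0
    for line in lines:
        state = parse_controller_line(line)
        if state is None:
            continue  # skip partial lines, e.g. right after the port opens
        sock.sendto(json.dumps(state).encode("utf-8"), addr)
        sent += 1
    return sent
```

In practice, `lines` would come from the serial port, for example by decoding each `readline()` of a pyserial `serial.Serial` object. Keeping the parser separate from the I/O makes it testable without the hardware attached.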

Among the many challenges, three stood out as major obstacles during our development process.

As with any project, and especially a fast-paced one, we learned many valuable lessons. We practiced communication and work distribution, gained considerable insight into the development process of a VR game, and learned how to estimate the effort required to implement a feature and how to prioritize tasks by importance.

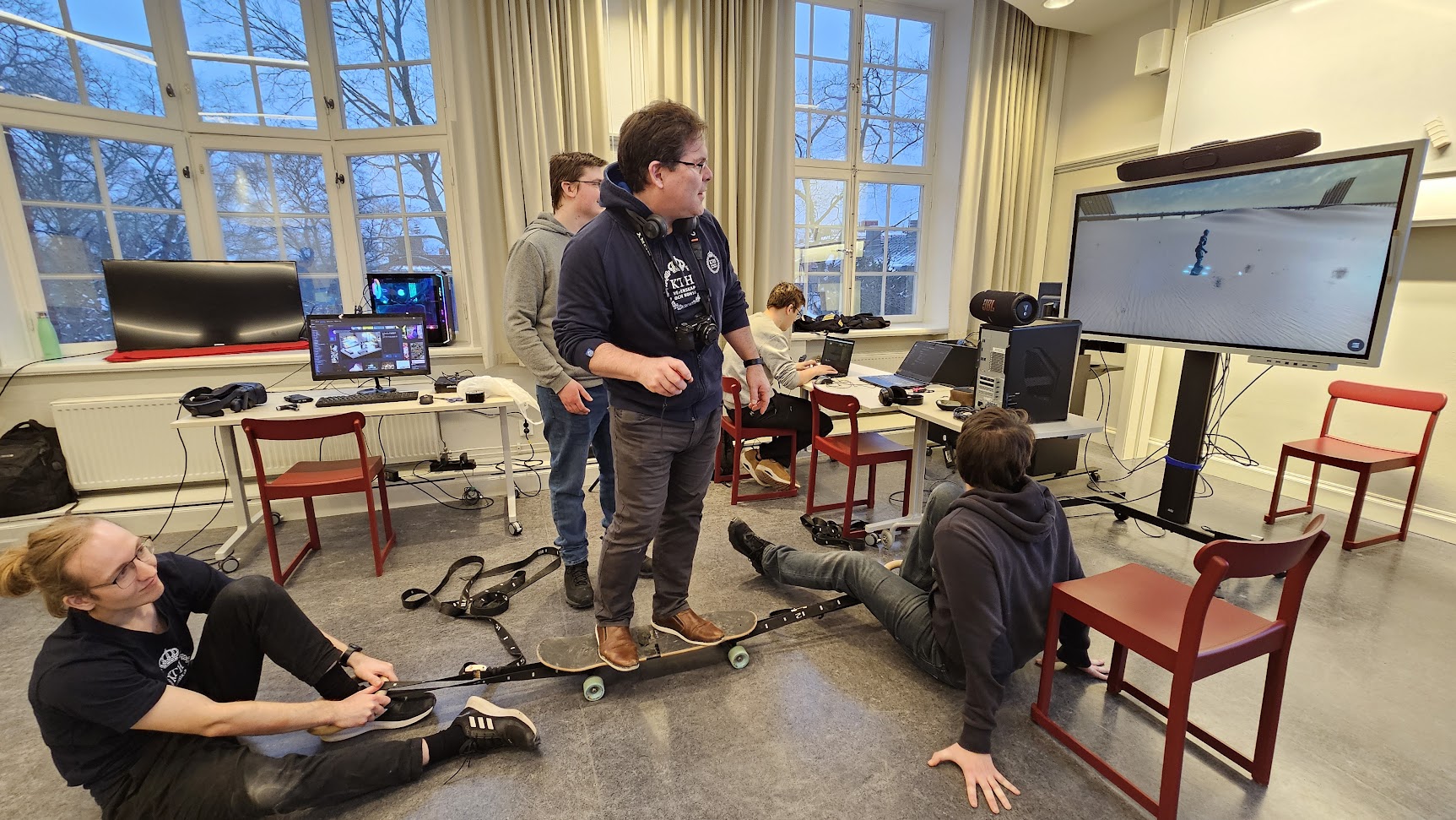

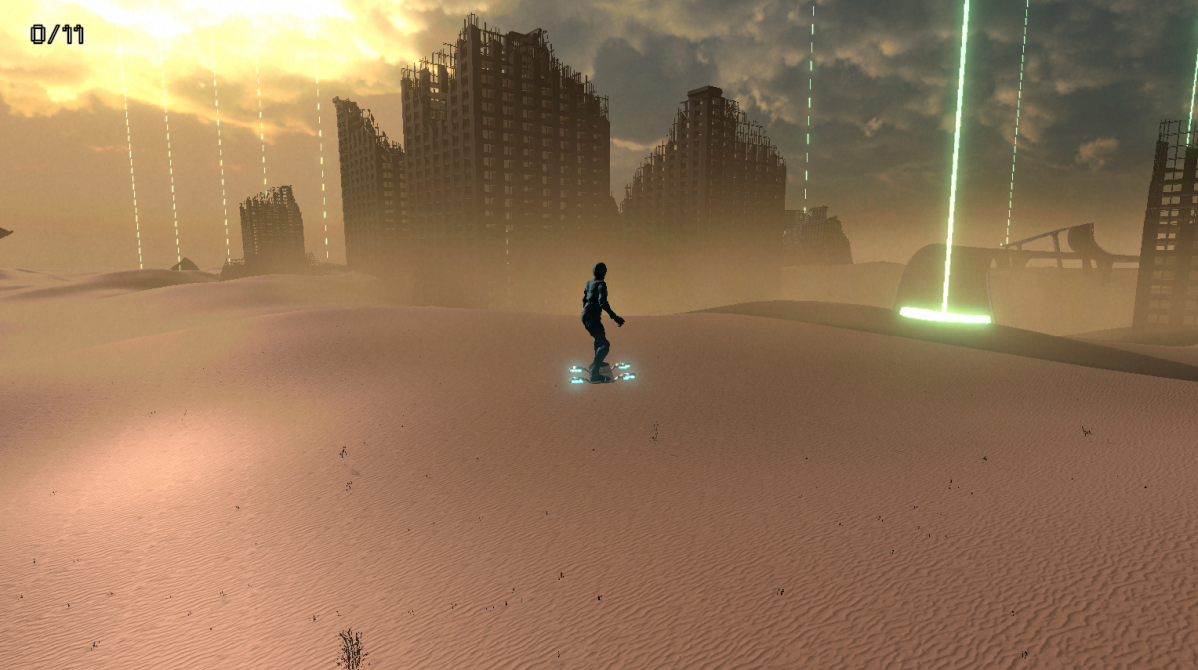

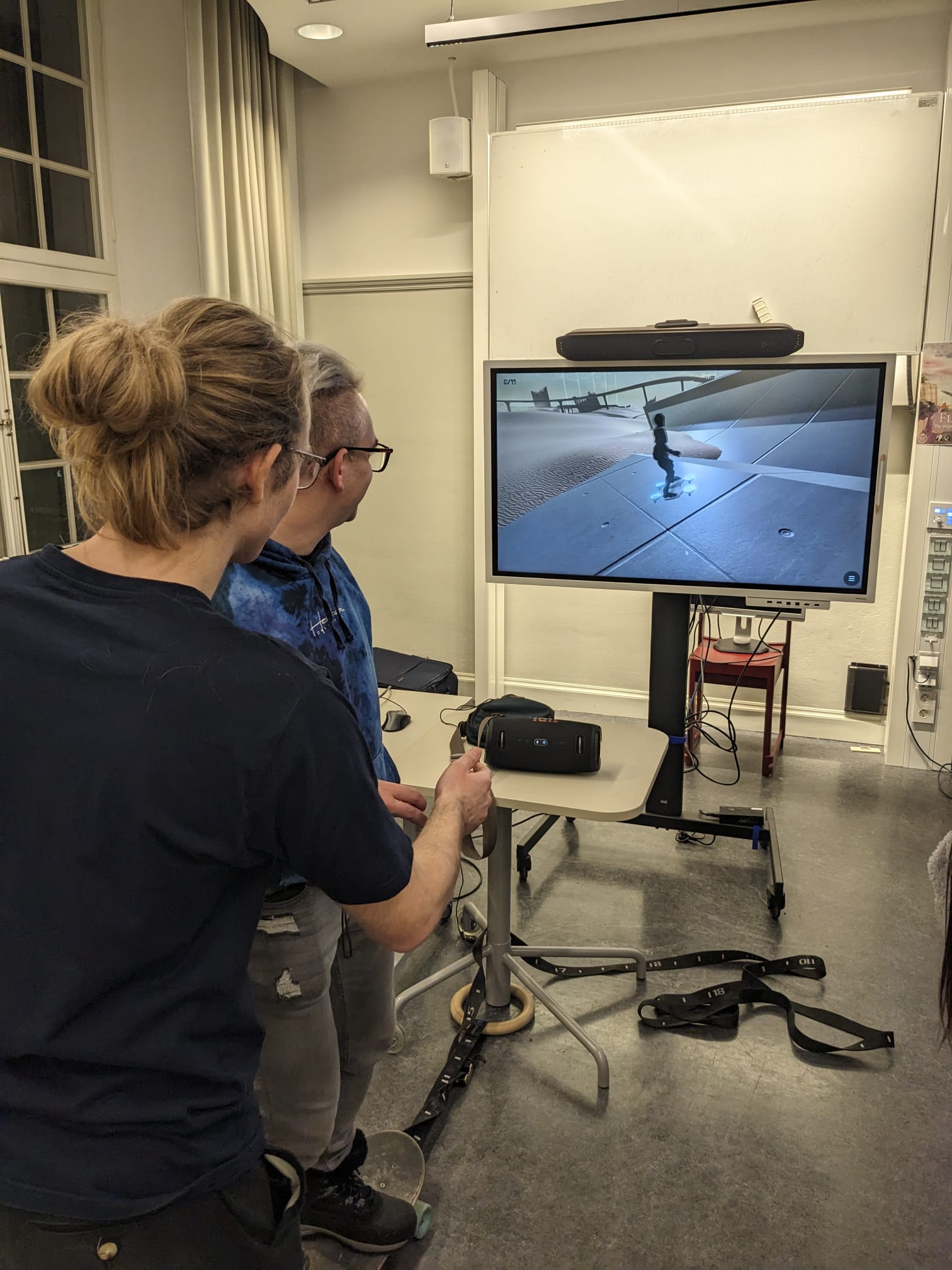

HOVRT, our second project, is a sandbox game where you can explore a vast desert and perform tricks with a hoverboard. The player will find different buildings, ancient ruins and maybe even a few robots.

For this second project, we narrowed the scope of the game, put a greater focus on detailed graphics, and explored physical interaction through the hoverboard.

Download on GitHub

The initial vision for this project included many dynamic light sources, so we decided early on to use deferred rendering to handle them. This made writing custom shaders, such as the one handling interior mapping, more challenging, because custom shaders for the deferred path are less well documented than those for the forward path. In the end, we did not use as many light sources as planned, but learning how the Universal Render Pipeline handles deferred shading, and how to work with it, was a valuable experience.
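Whatever the rendering path, the heart of an interior mapping shader is a per-pixel ray-box intersection: the view ray enters through the window, and the shader finds which wall, floor, ceiling, or back wall of an imaginary room it hits first. The sketch below shows that math in Python for readability only; a real implementation would live in a shader, and in the deferred path its result would be written to the G-buffer rather than lit directly. It assumes a hypothetical tangent-space convention in which the window is the z = 0 face of a unit room of configurable depth; `interior_hit` is an illustrative name, not our shader's code.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def interior_hit(u: float, v: float, view_dir: Vec3, depth: float = 1.0) -> Tuple[str, Vec3]:
    """Return which room surface a view ray hits first, and where.

    Assumed convention: the window is the z = 0 face of a room spanning
    [0, 1] x [0, 1] x [0, depth]; (u, v) is the point on the window and
    view_dir points into the room (positive z).
    """
    dx, dy, dz = view_dir
    if dz <= 0.0:
        raise ValueError("view_dir must point into the room (z > 0)")

    # One candidate plane per axis: the one the ray is travelling towards.
    candidates = [(depth / dz, "back wall")]
    if dx > 0.0:
        candidates.append(((1.0 - u) / dx, "right wall"))
    elif dx < 0.0:
        candidates.append(((0.0 - u) / dx, "left wall"))
    if dy > 0.0:
        candidates.append(((1.0 - v) / dy, "ceiling"))
    elif dy < 0.0:
        candidates.append(((0.0 - v) / dy, "floor"))

    # The nearest plane along the ray is the surface that is visible.
    t, surface = min(candidates)
    origin = (u, v, 0.0)
    hit = tuple(o + t * d for o, d in zip(origin, view_dir))
    return surface, hit
```

The two in-plane coordinates of the hit point could then serve as texture coordinates for whichever surface was hit.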

With the experience acquired from our first project, we were able to iterate much faster and more efficiently. However, we faced different obstacles this time:

To detect the skateboard's tilt, we initially considered a Wi-Fi-enabled Arduino board, given our success with Arduino in the first project. However, we discarded this idea in favor of a simpler approach, prioritizing the game's mechanics and graphics over intricate Arduino programming and networking. Instead, we attached a phone to the bottom of the skateboard and developed a straightforward Unity Android app that relays the phone's tilt updates to the game. We initially planned to use UDP broadcasting, but ran into problems receiving the broadcast packets. As a result, we fell back on a basic method: sending the packet to every IP address in the subnet.
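The "send to every address" fallback is easy to sketch. Our app was written in Unity, so this Python version only illustrates the idea under assumptions: a /24 subnet, a hypothetical port 9001, and a packet of two little-endian 32-bit floats for pitch and roll. All function names here are hypothetical.

```python
import ipaddress
import socket
import struct

TILT_PORT = 9001  # hypothetical port the game listens on
PACKET = struct.Struct("<ff")  # assumed layout: pitch, roll as little-endian floats


def encode_tilt(pitch: float, roll: float) -> bytes:
    return PACKET.pack(pitch, roll)


def decode_tilt(data: bytes) -> tuple:
    return PACKET.unpack(data)


def subnet_targets(local_ip: str, prefix_len: int = 24) -> list:
    """Every usable host address in the phone's subnet, excluding the phone itself."""
    net = ipaddress.ip_network(f"{local_ip}/{prefix_len}", strict=False)
    return [str(host) for host in net.hosts() if str(host) != local_ip]


def send_to_subnet(sock: socket.socket, payload: bytes, targets, port: int = TILT_PORT) -> int:
    """Unicast the same datagram to each address instead of relying on broadcast."""
    sent = 0
    for ip in targets:
        try:
            sock.sendto(payload, (ip, port))
            sent += 1
        except OSError:
            pass  # some addresses may be unreachable; keep going
    return sent
```

This trades bandwidth for simplicity: on a /24 subnet, every tilt update becomes 253 unicast datagrams, but the game only needs to listen on one port and never has to receive a broadcast.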

Development was significantly easier thanks to the expertise gained from our first project. However, we still encountered some obstacles:

Initially, we aimed for precise player avatar movement using a depth camera for skeleton tracking. However, because the available SDKs were deprecated or expensive, we turned to a Unity plugin for Google's MediaPipe, which enables AI body tracking from a regular webcam. The plugin was complex and hard to integrate, and its high-level examples had issues, so we scaled the feature back to interpolating the avatar's hoverboard stance between predefined poses. Unfortunately, the plugin crashed persistently whenever the scene was restarted, and despite our attempts to resolve the issue, the feature did not make it into the final game.
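Interpolating between predefined poses boils down to a per-joint linear blend driven by a single tracked value. The sketch below assumes two hypothetical stances (`UPRIGHT` and `CROUCH`) stored as joint positions, and a blend weight derived from some tracked measurement, such as hip height, via an inverse lerp; neither the poses nor the choice of measurement come from the actual project.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]
Pose = Dict[str, Vec3]

# Hypothetical predefined stances (joint positions in metres, avatar space).
UPRIGHT: Pose = {"hips": (0.0, 1.00, 0.0), "head": (0.0, 1.70, 0.0)}
CROUCH: Pose = {"hips": (0.0, 0.70, -0.1), "head": (0.0, 1.30, 0.15)}


def inverse_lerp(a: float, b: float, value: float) -> float:
    """Where value sits between a and b, clamped to [0, 1]."""
    if a == b:
        return 0.0
    return min(1.0, max(0.0, (value - a) / (b - a)))


def lerp3(p: Vec3, q: Vec3, t: float) -> Vec3:
    """Linear interpolation between two 3D points."""
    return tuple(pi + (qi - pi) * t for pi, qi in zip(p, q))


def blend_pose(a: Pose, b: Pose, t: float) -> Pose:
    """Interpolate every joint the two poses share."""
    return {joint: lerp3(a[joint], b[joint], t) for joint in a.keys() & b.keys()}
```

For example, `blend_pose(UPRIGHT, CROUCH, inverse_lerp(standing_hip_y, crouched_hip_y, tracked_hip_y))` would bend the avatar as the player crouches, where the two reference heights are hypothetical calibration values. A production rig would more likely blend joint rotations (for instance with quaternion slerp) than raw positions.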

We applied our takeaways from the previous project and were able to iterate faster and more efficiently. We realized how important it is to have a clear vision of the project and to be able to communicate it to the team. We also learned how to work with a new rendering pipeline, and how to integrate different devices into a single system.

We worked in parallel and collaborated closely to achieve the best possible result. Some of the roles we took on were the following: